How I Turned My Colleagues Into Markdown Files

I've been running my coworkers as AI prompts for a few weeks now.

Not to replace them. To summon them at midnight when I'm not sure if my code is actually good or just "works on my machine" good.

This started with one question: what would Sam say about this code?

It Started With Sam

I was reviewing my own PR at 11pm, and I thought: "What would Sam say about this?"

Sam is our precision guy. Types, naming, DRY: if it could be tighter, Sam would find it. He once left the comment "Can we be stricter here?" on a function that worked perfectly fine.

So I extracted it. What Sam looks for. How Sam thinks. The exact phrases Sam uses when he's found something that could be better.

Then I fed it to an AI with my diff.

It complained about my variable names. Just like Sam would.

That's when I realized: I've sat in enough code reviews with these people. I know their patterns. I know what they catch that I don't.

So I wrote them all down.

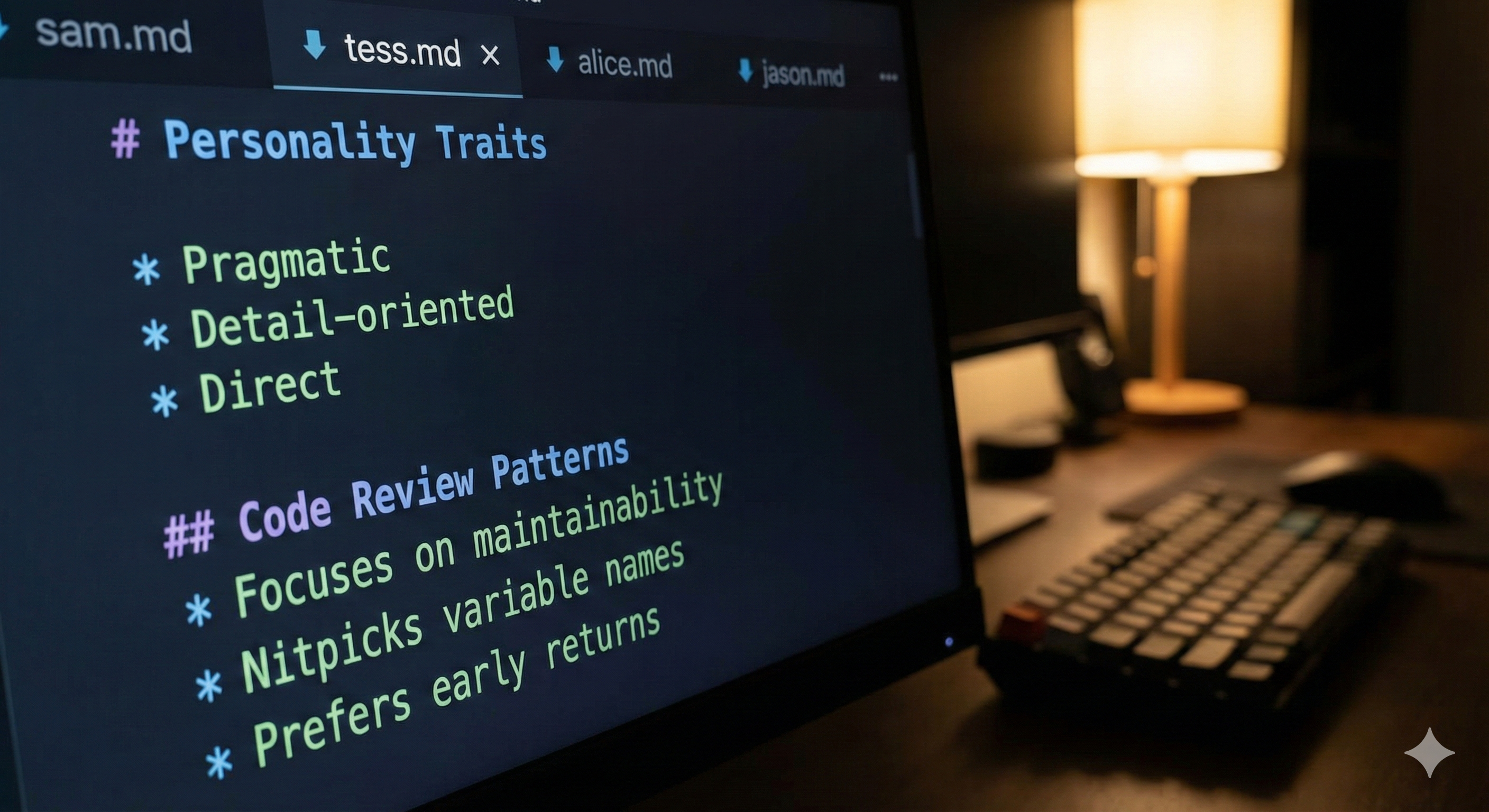

How I Actually Built Them

I didn't just write these from memory. That would be a vibe, not a system.

I went through three years of GitHub PRs. For each colleague, I filtered to reviews they'd given, not PRs they'd authored. Their reviewing voice, not their coding voice.

For Sam, that was 847 reviews. I fed them to an AI and asked: "What patterns do you see? What does this person always comment on? What phrases do they use repeatedly?"

The AI found things I hadn't consciously noticed:

- Sam uses GitHub suggestion blocks for code fixes. Collaborative, not demanding. "Can we be stricter here?"

- Tess asks "just curious: why...?" and "maybe a stupid question - but..." She probes data integrity with questions, not accusations.

- Alice is terse. "Done." "Fixed." "Intentional." "Lies" (when code doesn't match comments).

- Jason catches typos and parameterization issues. "100 or 10 typo?" "Should this be configurable?"

The prompts aren't my impression of my colleagues. They're built from what my colleagues actually wrote.
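Here is a minimal sketch of that filtering step: keep only the comments a person wrote on other people's PRs, then bundle them with the questions quoted above. It assumes the review comments have already been exported into simple records. `ReviewComment`, `reviews_given_by`, `build_extraction_prompt`, and `repeated_phrases` are hypothetical names for illustration, not part of any GitHub tooling.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewComment:
    """One exported review comment (hypothetical record shape)."""
    pr_author: str  # who opened the PR
    reviewer: str   # who wrote this comment
    body: str       # the comment text


def reviews_given_by(comments, person):
    """Keep comments `person` wrote on PRs they did not author."""
    return [
        c for c in comments
        if c.reviewer == person and c.pr_author != person
    ]


def build_extraction_prompt(person, comments):
    """Bundle the comments with the three questions from the post."""
    questions = (
        "What patterns do you see? "
        "What does this person always comment on? "
        "What phrases do they use repeatedly?"
    )
    bodies = "\n".join(f"- {c.body}" for c in comments)
    return f"Review comments written by {person}:\n{bodies}\n\n{questions}"


def repeated_phrases(comments, min_count=2):
    """Cheap sanity check on the AI's answer: exact comments that recur."""
    counts = Counter(c.body.strip().lower() for c in comments)
    return [(body, n) for body, n in counts.most_common() if n >= min_count]
```

The exact-match counter is deliberately crude. It won't find style patterns, but it gives a quick way to confirm that a catchphrase the AI reports really does show up more than once.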

Then I Wrote One for Myself

This is the weird part.

Not because I think I'm a great reviewer. Because I wanted to see what I actually focus on. Turns out I have patterns too.

I'm the "is this actually used?" person. Error handling over asserts. Cleanup that got forgotten. Code that's doing more than it needs to. My reviews are full of "why this change?" and "can be done in a subsequent PR though :)" — pragmatic, not perfectionist.

What does synthetic Bristena catch? Over-engineering. Dead code. Asserts that should be proper error checks. When I'm about to add complexity, synthetic me asks "is this actually used?" and half the time the answer is no.

Having a "Bristena" prompt alongside the others also shows my blind spots. Synthetic Tess finds domain issues. Synthetic Sam finds naming issues. Synthetic Bristena finds... unnecessary complexity. Turns out I'm decent at simplifying but sometimes miss the big-picture stuff.

The Cast

I started with five from my backend team:

Sam — The Collaborator. Type safety, naming precision, DRY. His reviews use GitHub suggestion blocks—collaborative, not demanding. "Can we be stricter here?" "What's your thinking on...?" Occasional dry humor: "Thanks, I hate it!"

Tess — The Domain Expert. Deep knowledge of medical data, EHR systems, DICOM. Curious, not accusatory: "just curious: why...?" "maybe a stupid question - but..." Catches data integrity issues everyone else misses.

Alice — The Architect. Layer boundaries, state machines, module organization. Terse. "Done." "Fixed." "Intentional." "Lies" (when code doesn't match comments). Doesn't care if code works—cares if it's in the right place.

Jason — The Detail Catcher. Parameterization and typos. Catches what everyone misses: "100 or 10 typo?" "Should this be configurable?" Concise and practical.

Bristena (me) — The Pragmatist. Error handling, cleanup, questioning unclear code. "is this actually used?" "why this change?" "can be done in a subsequent PR though :)" Over-engineering radar always on.

Then I expanded to another engineering team. Seven more colleagues, seven more markdown files:

Dan — The Copy Perfectionist. "Update copy to:" is his catchphrase. Exact wording matters. Signs off with "Beautiful 💅"

Maya — The UX Thinker. "Just out of curiosity, why did you go for 4hrs exactly?" Cares about naming precision—if a function is called fetchResults but constructs them, the name lies.

Marco — The Tech Lead. 814 PRs reviewed. "Can you add a Zod check here?" Uses "nit:" liberally. My favorite: "Approving with dissent:" when he approves but registers disagreement.

Liam — The Cleanup Advocate. Reviews are often just "🧹". Hates nested ternaries. Solution: extract to a component.

Rachel — The Visual Verifier. "Can I see screenshots?" Enthusiastic ("Niiice!") but thorough. Colors from theme, not hardcoded.

Kai — The Pragmatist. "noice", "seems legit", "all gucci fam". Casual tone, but catches edge cases. Favorite suggestion: "can we just use lodash?"

Oscar — The Testing Champion. 881 PRs. "This area desperately needs tests 🙈". Explains the why, links to docs. Won't let untested code pass.

The Council

For important PRs, I run my "council" of reviewers. Parallel, independent, each doing their thing.

The output is structured by severity:

- 🔴 Blocking: Oscar wants tests, Marco wants validation

- 🟡 Suggestions: Liam wants component extraction, Maya flags naming

- 🟢 Nitpicks: Dan has copy tweaks, Kai suggests lodash

Vote tally. Final verdict. Ship or fix.

The council has override rules: if the plan requires a pattern that a reviewer flags, it's marked "plan-justified." This prevents false positives where Alice flags "architectural debt" that's actually intentional design.
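A rough sketch of how a council run like this could be wired up: each reviewer runs independently on the same diff, findings are grouped by severity, anything matching a pattern the plan requires is marked plan-justified, and the votes are tallied. The names (`Finding`, `run_council`, `plan_patterns`) are hypothetical. The verdict rule, where any unjustified blocking finding means "fix", is also an assumption, since the exact voting rule isn't spelled out here. In practice each reviewer callable would wrap an AI call with that colleague's prompt.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, replace

SEVERITIES = ("blocking", "suggestion", "nitpick")


@dataclass(frozen=True)
class Finding:
    reviewer: str
    severity: str   # one of SEVERITIES
    pattern: str    # short label for what was flagged
    message: str
    plan_justified: bool = False


def run_council(reviewers, diff, plan_patterns=frozenset()):
    """Run every reviewer on the same diff, independently and in parallel.

    `reviewers` maps a name to a callable `diff -> list[Finding]`.
    `plan_patterns` holds pattern labels that the plan explicitly requires.
    """
    with ThreadPoolExecutor(max_workers=len(reviewers) or 1) as pool:
        futures = {name: pool.submit(fn, diff) for name, fn in reviewers.items()}
        results = {name: fut.result() for name, fut in futures.items()}

    grouped = {severity: [] for severity in SEVERITIES}
    votes = Counter()
    for name, findings in results.items():
        has_live_blocker = False
        for finding in findings:
            if finding.pattern in plan_patterns:
                # Intentional design: keep it visible, but it no longer counts.
                finding = replace(finding, plan_justified=True)
            elif finding.severity == "blocking":
                has_live_blocker = True
            grouped[finding.severity].append(finding)
        votes["fix" if has_live_blocker else "ship"] += 1

    # Assumed rule: a single unjustified blocker is enough to hold the PR.
    verdict = "fix" if votes["fix"] > 0 else "ship"
    return grouped, votes, verdict
```

Plan-justified findings stay in the grouped output instead of being dropped, so the report still shows what was flagged and why it was waived.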

They Know

I told a few colleagues. Not all of them—some would find it weird, some would overthink it.

The ones who know thought it was hilarious.

Sam immediately wanted to review his own prompt. "I don't say 'Can we be stricter here?' that often, do I?" (He does.)

Tess saw hers and laughed. "Scarily accurate." Then, half-joking: "The company just needs you and your AI friends now."

Alice's response: "Accurate." (On-brand.)

Dan sent me a 💅 emoji. No words.

Kai's response: "Can just go on holiday and let this AI pretend to be me. Scary good."

The Uncomfortable Truth

Writing these prompts taught me how little I was learning from code reviews.

I sat in reviews with Sam for two years. But until I ran his reviews through an AI, I couldn't articulate his process. I knew he cared about precision. I didn't know he always used suggestion blocks, asked "Can we be stricter here?" on every PR, and turned type safety into a collaborative conversation.

Forcing myself to extract my colleagues' patterns made me actually study them. And studying them made me better.

It's awkward to admit. These people were teaching me every code review, and I was only half-listening until I tried to turn them into markdown.

Build Your Own

Start with one colleague. The one who catches things you miss.

- Observe their patterns — What do they always check? What questions do they always ask?

- Capture their format — Tables? Emojis? What's their catchphrase?

- Write the prompt — Personality, checklist, communication style, output format

- Run it on your code — Before you open the PR. See what it catches.
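The steps above can be sketched as a small template that renders one reviewer to a markdown prompt. The four parts come from the list (personality, checklist, communication style, output format). The class name, field names, and section headings are my assumptions, not a required layout, and the Sam entry only reuses details already described in this post.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewerPersona:
    name: str
    role: str
    personality: str
    checklist: list[str]
    communication_style: str
    catchphrases: list[str] = field(default_factory=list)
    output_format: str = "Group findings as Blocking, Suggestions, Nitpicks."

    def to_markdown(self) -> str:
        """Render the persona as a markdown prompt file."""
        lines = [f"# {self.name}: {self.role}", "", "## Personality", self.personality]
        lines += ["", "## Checklist"]
        lines += [f"- {item}" for item in self.checklist]
        lines += ["", "## Communication style", self.communication_style]
        if self.catchphrases:
            lines += ["", "## Catchphrases"]
            lines += [f'- "{phrase}"' for phrase in self.catchphrases]
        lines += ["", "## Output format", self.output_format]
        return "\n".join(lines) + "\n"


def review_request(persona: ReviewerPersona, diff: str) -> str:
    """Combine the persona prompt with the diff to review."""
    return f"{persona.to_markdown()}\nReview this diff in character:\n\n{diff}\n"


# Example persona, filled in from the description of Sam above.
sam = ReviewerPersona(
    name="Sam",
    role="The Collaborator",
    personality="Precision-minded. If it could be tighter, he finds it.",
    checklist=["Type safety", "Naming precision", "DRY"],
    communication_style="Uses suggestion blocks. Collaborative, not demanding.",
    catchphrases=["Can we be stricter here?", "Thanks, I hate it!"],
)
```

Writing `sam.to_markdown()` to a file gives you the markdown prompt, and `review_request(sam, diff)` is the text you would hand to the AI before opening the PR.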

You'll get two things: an AI that catches some of what your colleague catches, and a better understanding of what makes them effective.

The second thing is worth more.

What Happened Next

My council of reviewers looked comprehensive. But I kept finding gaps: performance issues, over-engineering, async bugs.

So I added generic experts: Performance Engineer, Async Expert, Over-engineering Detector. The panel grew to 15.

Then I hit false positives. Reviewers flagging things that were intentionally designed that way.

That's a whole other story—how I fixed the false positive problem by making reviewers plan-aware. I wrote about that in The 15-Expert Code Review Panel.

But it all started here. With Sam. With a markdown file. With the question: "What would my colleague say about this code?"

Turns out, if you pay enough attention, you can write it down.

Names have been changed. The patterns are real.

Who's the colleague whose reviews taught you the most? What do they catch that you don't?